In the previous post, we covered the benefits of moving to the cloud for scale. In this post, we will look at some best practices for building applications for the cloud.

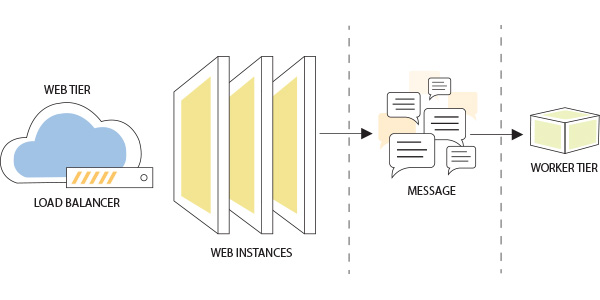

1. Use messaging to decouple components

One of the important principles of scalable application design is to implement asynchronous communication between components. To start with, you can break the application down into multiple smaller services and deploy a message queue for communication between them. A simple example would be to have your application’s web instances put messages into a queue, from which worker instances then pick them up.

Such a mechanism is very effective for processing user requests that are time-consuming, resource-intensive, or dependent on remote services that may not always be available.

Keeping the communication asynchronous allows the system to scale much further than if all components were tightly coupled. By increasing compute resources for the workers, larger workloads can be processed quickly and in parallel. From a user experience standpoint, there are no interruptions or delays in the activity flow, which improves the user’s perception of the product.

Most importantly, this keeps the web tier of the application lightweight, so each compute instance running in the cloud can support more users.
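To make the web-and-worker pattern concrete, here is a minimal sketch in Python. It uses the standard library's in-process `queue.Queue` as a stand-in for a real message queue service, and the names `handle_web_request`, `worker`, and `run_demo` are hypothetical, chosen only for this illustration. The upper-casing step is a placeholder for whatever slow work your workers would do.

```python
import queue
import threading


def handle_web_request(jobs, job_id, payload):
    """Web tier: enqueue the work and return right away."""
    jobs.put({"id": job_id, "payload": payload})
    return "accepted"


def worker(jobs, results, lock):
    """Worker tier: pull messages until a None sentinel arrives."""
    while True:
        message = jobs.get()
        if message is None:
            break
        processed = message["payload"].upper()  # placeholder for slow work
        with lock:
            results[message["id"]] = processed


def run_demo(num_workers=3, num_jobs=10):
    jobs = queue.Queue()  # stand-in for a managed queue service (assumption)
    results = {}
    lock = threading.Lock()
    threads = [
        threading.Thread(target=worker, args=(jobs, results, lock))
        for _ in range(num_workers)
    ]
    for thread in threads:
        thread.start()
    for i in range(num_jobs):
        handle_web_request(jobs, i, f"job-{i}")
    for _ in threads:
        jobs.put(None)  # one sentinel per worker so each one exits
    for thread in threads:
        thread.join()
    return results
```

Raising `num_workers` is the in-process equivalent of adding worker instances: the web-facing function does not change at all, which is the point of the decoupling.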

2. Be stateless

In order to scale horizontally, it is important to design the application back-end such that it is stateless. Statelessness means that the server that services a request does not retain any memory or information of prior requests. This enables multiple servers to serve the same client. By being stateless (or offloading state information to the client), services can be duplicated and scaled horizontally, improving availability and catering to spikes in traffic by scaling automatically.

REST, or Representational State Transfer, is a good way to architect back-end services. REST calls for stateless interactions, and adhering to its principles provides rich integration possibilities because it is typically implemented over the well-known HTTP protocol. Good-quality HTTP clients are available in virtually every mainstream language, making access to the API simple and open regardless of client language preference.
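One way to offload state to the client is to hand it a signed token and have it send the token back with every request. The sketch below is an illustration under assumptions, not a production scheme: `SECRET_KEY`, `issue_token`, `read_token`, and `handle_add_to_cart` are hypothetical names, and the token is only signed with HMAC, not encrypted, so it should not carry secrets.

```python
import base64
import hashlib
import hmac
import json

# Hypothetical key shared by every server instance; load it from config in practice.
SECRET_KEY = b"demo-secret-shared-by-all-servers"


def issue_token(state):
    """Serialize the client's state and sign it so tampering can be detected."""
    body = base64.urlsafe_b64encode(json.dumps(state, sort_keys=True).encode())
    signature = hmac.new(SECRET_KEY, body, hashlib.sha256).hexdigest()
    return body.decode() + "." + signature


def read_token(token):
    """Verify the signature, then return the state the client sent back."""
    body, _, signature = token.rpartition(".")
    expected = hmac.new(SECRET_KEY, body.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(signature, expected):
        raise ValueError("token signature mismatch")
    return json.loads(base64.urlsafe_b64decode(body.encode()))


def handle_add_to_cart(token, item):
    """Any server instance can run this; nothing is kept in server memory."""
    state = read_token(token)
    state["cart"] = state.get("cart", []) + [item]
    return issue_token(state)
```

Because the handler depends only on its arguments and the shared key, two consecutive requests from the same client can land on different servers and still produce the right result.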

3. Cache aggressively

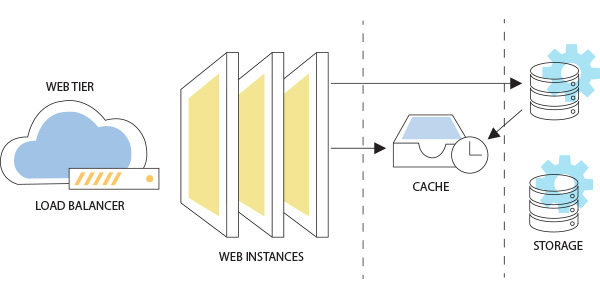

Caching improves performance by storing recently used data for immediate reuse. Application throughput and latency are typically bound by how quickly data and context can be retrieved, shared, and updated. Add a cache at every level: the browser, your network, application servers, and databases. You can implement caching with a Content Delivery Network (CDN), especially for static data, or with caching solutions like Redis and Memcached. Cache aggressively in order to significantly increase the application’s performance and its ability to scale.

Before adding elements to a cache, assess the following two things:

- How often is this data accessed?

- How often does this data change?

If data is accessed very often and changes rarely, it is a prime candidate for caching.
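The two questions above map naturally onto a cache with a time-to-live (TTL): frequent access is what makes the cache pay off, and the rate of change tells you how long an entry can safely live. Here is a minimal in-memory sketch; `TTLCache` and `get_or_load` are hypothetical names for this illustration, and in a real deployment a shared store such as Redis or Memcached would usually play this role.

```python
import time


class TTLCache:
    """Tiny in-memory cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without waiting
        self._entries = {}   # key -> (expires_at, value)

    def get_or_load(self, key, loader):
        """Return the cached value, calling loader() only on a miss or expiry."""
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]
        value = loader()
        self._entries[key] = (now + self._ttl, value)
        return value
```

A call such as `cache.get_or_load("user:42", load_profile)` (where `load_profile` is your own function that hits the database) would reach the database at most once per TTL window for that key.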

4. Expect transient failures

A large cloud application spans many compute instances (VMs), messaging services (queues), databases, and other services. Although many managed services on the cloud provide excellent availability, failures can still happen.

Transient failures may manifest themselves as temporary service outages (e.g., being unable to write to the database) or as a slowdown in performance (throttling).

Failing an operation outright because of a transient failure is not a good option. Instead, implement a strategy that retries the operation. Do not retry immediately, because in most cases an outage may last for a few minutes or more. Delay the retry so that the resource has time to recover. Such smart retry policies help handle busy signals or outright failures without compromising user experience.
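One common way to implement a delayed retry is exponential backoff with jitter, where the wait roughly doubles after each failed attempt and is randomized so that many clients do not retry in lockstep. That specific policy is our choice for this sketch, not the only option. `TransientError` and `retry_with_backoff` are hypothetical names, and the default delays are illustrative values you would tune for your own services.

```python
import random
import time


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, throttling)."""


def retry_with_backoff(operation, max_attempts=5, base_delay=0.5,
                       max_delay=30.0, sleep=time.sleep):
    """Call operation(), retrying on TransientError with growing, jittered delays."""
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the failure to the caller
            # Delay cap doubles each attempt: base, 2x base, 4x base, ... up to max_delay.
            cap = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, cap))  # "full jitter": wait a random time up to the cap
```

Only errors you know to be transient should be wrapped in `TransientError`; retrying a permanent failure, such as a validation error, just delays the inevitable.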

5. Don't be chatty

Since a cloud application is spread across multiple component services, every interaction might involve connection overhead. This means going back and forth many times can be detrimental to performance and expensive in the long run.

Pay attention to how data is accessed and consumed. Wherever possible, process data in batches to minimize excessive communication. For user interfaces, try to cache objects that may need frequent access, or better still, query most of the data needed for a view up front and cache it on the browser side to improve performance and reduce round trips.

When it comes to user interfaces, a pure object-based REST API may not be sufficient to provide all the data needed for a screen (especially a dashboard) up front. Avoid the temptation to make multiple calls to the back-end. Instead, build an aggregation API whose data can be cached to improve performance.

Remember, on the cloud, innocuous-looking functions may end up making a web-service call, adding overhead to your functionality.
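To see the difference in round trips, consider this small sketch. `FakeBackend` is a hypothetical stand-in for a remote service that simply counts how many calls it receives; `dashboard_chatty` and `dashboard_aggregated` are likewise illustrative names, and the batch method `get_many` is assumed to exist on the back-end.

```python
class FakeBackend:
    """Hypothetical remote service that counts round trips."""

    def __init__(self, records):
        self._records = records
        self.round_trips = 0

    def get_one(self, key):
        self.round_trips += 1  # one network call per object
        return self._records[key]

    def get_many(self, keys):
        self.round_trips += 1  # one network call for the whole batch
        return {key: self._records[key] for key in keys}


def dashboard_chatty(backend, keys):
    """One call per widget: round trips grow with the size of the screen."""
    return {key: backend.get_one(key) for key in keys}


def dashboard_aggregated(backend, keys):
    """A single aggregated call returns everything the screen needs."""
    return backend.get_many(keys)
```

Both functions return the same data, but for a dashboard with three widgets the chatty version makes three calls where the aggregated one makes a single call, and the aggregated response is also a natural unit to cache.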

Summary

Use these design principles while building your cloud application. They will help you build applications that can scale to meet demand by leveraging auto-scaling. By recognizing the pitfalls of the cloud environment and designing around them, you can build fault-tolerant apps that users are happy with.